Motivation and Project Overview

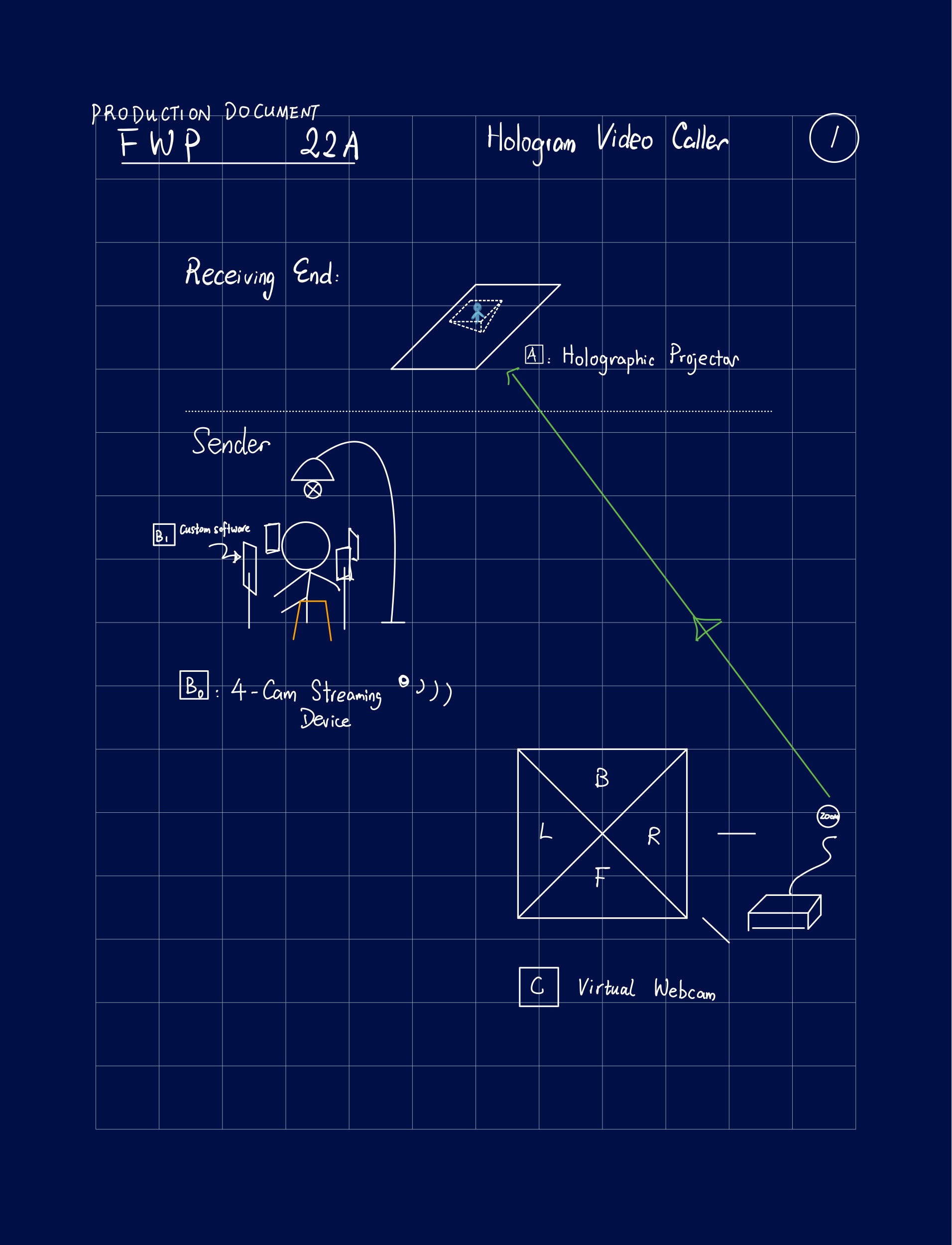

Hologram video calls are something people in the Star Wars universe take for granted. I want to bring them into reality (albeit in one direction only, for the time being) using existing video-conferencing infrastructure, while keeping the finished product as open-source as possible.

In short, this project combines a typical pyramidal hologram projector with real-time video stream synthesis using OpenCV and Python. It breaks down into three distinct tasks (four, counting B0 and B1 separately):

- A. Design and manufacturing of projectors for various devices.

- B0. Deployment of a custom iOS app that, on each device,

    - collects the video feed,

    - syncs time,

    - performs necessary image corrections such as perspective change, and

    - compresses and transmits video streams over wired connections.

- B1. Evaluation of lighting and camera mounting positions.

- C. Real-time video synthesis fed into virtual camera software.
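One common way to model a perspective correction like the one in B0, sketched here under assumptions, is a planar homography: a 3×3 matrix estimated from four point correspondences and applied by mapping each output pixel back into the source frame. The app itself would run on iOS, so this NumPy version only illustrates the geometry. The helper names `homography_from_points` and `warp_perspective` are hypothetical, and sampling is nearest-neighbour for brevity; in a Python prototype, OpenCV's `cv2.getPerspectiveTransform` and `cv2.warpPerspective` do the same job with proper interpolation.

```python
import numpy as np


def homography_from_points(src, dst):
    """Solve for the 3x3 homography H (with H[2, 2] = 1) mapping src[i] -> dst[i].

    src and dst are four (x, y) pairs, no three of them collinear.
    """
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h00*x + h01*y + h02) / (h20*x + h21*y + 1), rearranged to be linear
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rhs.append(u)
        # v = (h10*x + h11*y + h12) / (h20*x + h21*y + 1), likewise
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.append(v)
    h = np.linalg.solve(np.array(rows, dtype=float), np.array(rhs, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)


def warp_perspective(img, H, out_shape):
    """Warp img by H into an array of shape out_shape = (height, width).

    Inverse mapping with nearest-neighbour sampling: each output pixel is mapped
    back through H^-1 and copies the source pixel it lands on, or stays 0 if it
    lands outside the source image.
    """
    h_out, w_out = out_shape
    ys, xs = np.mgrid[0:h_out, 0:w_out]
    xs, ys = xs.ravel(), ys.ravel()
    # Homogeneous output coordinates (x, y, 1), one column per pixel
    pts = np.stack([xs, ys, np.ones_like(xs)]).astype(float)
    src = np.linalg.inv(H) @ pts
    sx = np.rint(src[0] / src[2]).astype(int)
    sy = np.rint(src[1] / src[2]).astype(int)
    inside = (sx >= 0) & (sx < img.shape[1]) & (sy >= 0) & (sy < img.shape[0])
    out = np.zeros((h_out, w_out) + img.shape[2:], dtype=img.dtype)
    out[ys[inside], xs[inside]] = img[sy[inside], sx[inside]]
    return out
```

In use, `src` would be four reference points as they appear in the tilted camera image and `dst` where those points should sit after correction; how those points are obtained (for example from a calibration target) is left open here.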

The first page of my early notes is attached.

(Code and design blueprints will be available on GitHub once the design phase ends.)

Progress

A.

Trivial.

B0.

Not yet implemented.

B1.

Not yet implemented. I have four iOS devices that can each capture at 1080p or better.

C.

6 Feb 2022

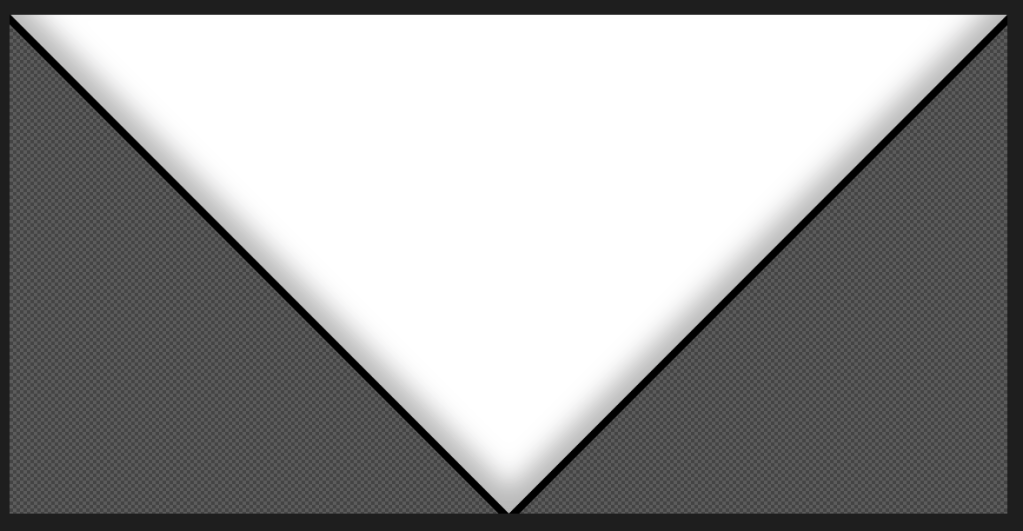

I used OpenCV to stitch the four feeds together and broadcast the result into Zoom as a virtual camera. For now, before any further optimization, I use a transparent PNG of a right isosceles triangle as a mask.

Using this image, pixels in each video feed at coordinates where the mask is transparent are discarded, and the four resulting triangles are combined using NumPy magic.
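A minimal NumPy sketch of that masking-and-combining step is below. Rather than loading the PNG, it builds the triangle mask procedurally; with OpenCV, `cv2.imread(path, cv2.IMREAD_UNCHANGED)` keeps the alpha channel, and `alpha > 0` would give an equivalent boolean mask. It assumes each feed has already been resized to a square n × n frame with the subject inside the frame's top triangle, and that the four views sit top, left, bottom and right by rotating in 90° steps. The function names are hypothetical, not the project's code.

```python
import numpy as np


def top_triangle_mask(n):
    """Boolean mask of the top triangle formed by the diagonals of an n x n square.

    It is a right isosceles triangle with its hypotenuse along the top edge and
    its right angle at the centre. Pixels on the diagonals are split so that the
    four 90-degree rotations of this mask cover every pixel exactly once.
    """
    if n % 2:
        raise ValueError("n must be even so the centre falls between pixels")
    r, c = np.indices((n, n))
    # Above the main diagonal (c > r) and on or above the anti-diagonal (c <= n - 1 - r)
    return (r < c) & (r + c <= n - 1)


def composite_pyramid(feeds):
    """Combine four n x n x 3 feeds into one n x n x 3 pyramid-hologram frame.

    feeds[k] fills the top triangle rotated k * 90 degrees counter-clockwise,
    i.e. k = 0 top, 1 left, 2 bottom, 3 right; pixels outside it are discarded.
    """
    if len(feeds) != 4:
        raise ValueError("expected exactly four feeds")
    n = feeds[0].shape[0]
    top = top_triangle_mask(n)
    out = np.zeros_like(feeds[0])
    for k, feed in enumerate(feeds):
        mask = np.rot90(top, k)  # where this view lands in the output
        view = np.rot90(feed, k)  # rotate the picture the same way as its mask
        out[mask] = view[mask]  # keep only the pixels inside the triangle
    return out
```

Because the four masks are rotations of one another with each diagonal pixel assigned to exactly one of them, every output pixel comes from exactly one feed; this needs an even side length, which 1080 satisfies. To push the frame into Zoom, one option (an assumption, since the tool isn't named here) is the `pyvirtualcam` package: open `pyvirtualcam.Camera(width=1080, height=1080, fps=...)` and call `cam.send(frame)`, converting OpenCV's BGR frames to RGB first.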

The mask's inner-shadow effect could perhaps be multiplied back into the video stream to produce a vignette, but that is not implemented in the current pipeline.
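Since this step isn't in the pipeline, here is only a hedged sketch of what "multiplying back" could look like. It assumes the shadow can be exported as an 8-bit grayscale shading map the same size as the frame (255 leaves a pixel untouched, 0 turns it black); the function name `apply_shading` is hypothetical. Integer arithmetic keeps the per-frame cost low and avoids a float round trip.

```python
import numpy as np


def apply_shading(frame, shade):
    """Multiply an H x W x 3 uint8 frame by an H x W uint8 shading map.

    shade = 255 leaves a pixel unchanged, 0 turns it black; values in between
    darken it proportionally, rounded to the nearest integer.
    """
    if frame.shape[:2] != shade.shape:
        raise ValueError("shade must match the frame's height and width")
    # Widen before multiplying so 255 * 255 cannot overflow uint8
    prod = frame.astype(np.uint32) * shade[..., None].astype(np.uint32)
    # Divide by 255 with rounding, then narrow back to uint8
    return ((prod + 127) // 255).astype(np.uint8)
```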

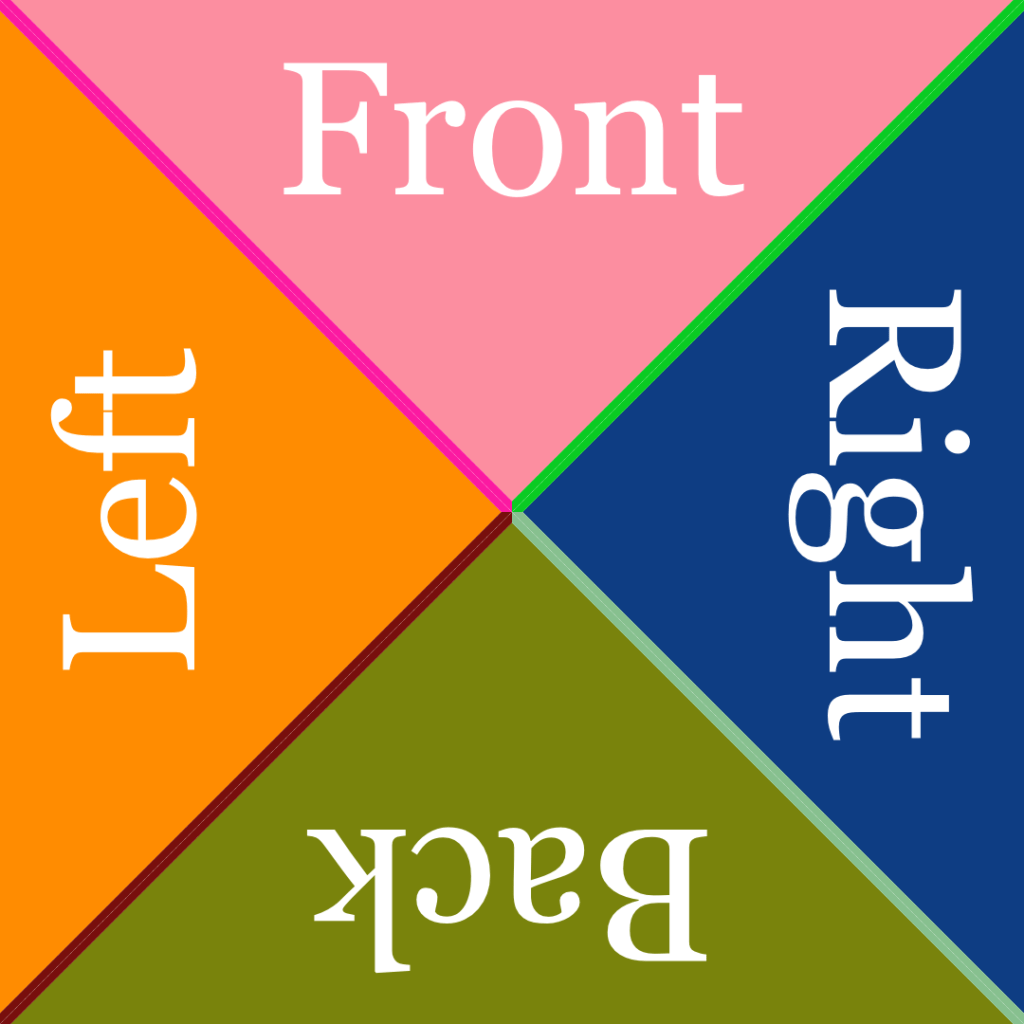

In all, the system can output 1080 × 1080 images like this at slightly over 50 frames per second.
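Sustaining 50 FPS means the whole per-frame path has to fit in about 1/50 s = 20 ms, and for a live feed the slowest frame matters as much as the average. A small, hypothetical harness (standard library only) for measuring that: it times any per-frame callable and reports mean FPS plus the worst frame time, after a few warm-up calls so one-off costs don't skew the result.

```python
import time


def measure_fps(render_frame, n_frames=200, warmup=10):
    """Time render_frame() over n_frames calls.

    Returns (mean_fps, worst_frame_ms). Warm-up calls are run first and not
    counted, so allocation and cache effects don't distort the average.
    """
    for _ in range(warmup):
        render_frame()
    durations = []
    for _ in range(n_frames):
        t0 = time.perf_counter()
        render_frame()
        durations.append(time.perf_counter() - t0)
    mean_fps = n_frames / sum(durations)
    worst_ms = max(durations) * 1000.0
    return mean_fps, worst_ms
```

Passing a closure that composites pre-captured frames would isolate the stitching cost from camera capture and virtual-camera output.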

And here’s the synthesized feed on a receiving Internet terminal, static but refreshing at 30 FPS.